Power Transformers: The Hidden Bottleneck in AI Compute Expansion and Data Center Power Demand

The rapid expansion of AI compute infrastructure is fundamentally reshaping global energy demand. As hyperscale AI data centers scale to support large language models, autonomous systems, and high-performance computing (HPC), their electricity consumption is increasing at an unprecedented rate. However, a critical constraint is often overlooked: the power transformer.

From grid interconnection to voltage regulation and load balancing, transformers serve as the backbone of data center power systems. Yet, their production lead times, design limitations, and deployment challenges are emerging as a hidden bottleneck in AI infrastructure growth. This article provides a technical and commercial analysis of transformer constraints and explores practical solutions for global buyers, engineers, and energy planners.

1. The Explosive Growth of AI Data Center Power Demand

The artificial intelligence revolution is fueling an unprecedented surge in data center electricity consumption. Global data center power demand is projected to more than double from around 415 TWh in 2024 to approximately 945 TWh by 2030, according to the International Energy Agency. That is growth of roughly 15% annually, more than four times faster than electricity consumption in all other sectors. In the United States alone, data centers consumed about 4.4% of total electricity in 2023 and could reach 6.7–12% by 2028, with hyperscale facilities driving demand from roughly 50 GW to over 134 GW by 2030.
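The annual growth figure can be sanity-checked from the two endpoint values. The sketch below is only an illustration: it assumes smooth compound growth between 2024 and 2030, which the underlying projection does not necessarily follow, and the function name is our own.

```python
def compound_annual_growth_rate(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that takes `start` to `end` in `years` years."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1 / years) - 1


# Figures quoted in the text: about 415 TWh (2024) and about 945 TWh (2030).
growth = compound_annual_growth_rate(415.0, 945.0, 2030 - 2024)
print(f"Implied annual growth: {growth:.1%}")
```

The result lands a little under 15% per year, which is consistent with the "roughly 15%" quoted above.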

This explosive growth is largely powered by AI-optimized servers and high-density GPU clusters required for training and inference of large language models. Unlike traditional servers, AI workloads demand massive parallel computing power, pushing individual racks from 5–15 kW to over 100 kW and entire campuses toward gigawatt-scale loads. As a result, power infrastructure—including generation, transmission, and critical equipment like power transformers—has become the primary bottleneck, with lead times stretching to 2–4 years and utilities struggling to keep pace.

AI-driven workloads are significantly more power-intensive than traditional computing.

Table: Power Consumption Comparison

| Workload Type | Average Power Density (kW/rack) | Growth Trend |
| --- | --- | --- |
| Traditional IT | 5–10 | Stable |
| Cloud Computing | 10–20 | Moderate |
| AI Training (GPU/ASIC) | 30–100+ | Rapid |

Technical Insight:

Modern AI data center power consumption can exceed 100 MW per facility, with some hyperscale campuses targeting 1 GW clusters. This exponential growth places immense pressure on substation transformers and upstream grid infrastructure.
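To see how rack density translates into facility-level demand, the following rough sketch multiplies rack count by per-rack power and an overhead factor. The 1,000-rack hall and the PUE (power usage effectiveness) value of 1.3 are illustrative assumptions, not figures from this article.

```python
def facility_power_mw(rack_count: int, kw_per_rack: float, pue: float = 1.3) -> float:
    """Total facility demand in MW: IT load scaled by an assumed PUE overhead factor."""
    it_load_kw = rack_count * kw_per_rack
    return it_load_kw * pue / 1000.0


# Hypothetical 1,000-rack hall at two rack densities from the comparison table.
for label, kw in [("Traditional IT", 10.0), ("AI training", 100.0)]:
    print(f"{label}: {facility_power_mw(1000, kw):.0f} MW")
```

Under these assumptions the same hall moves from the low tens of megawatts to well over 100 MW, which is the scale at which dedicated substation transformers become unavoidable.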

2. Role of Power Transformers in AI Infrastructure

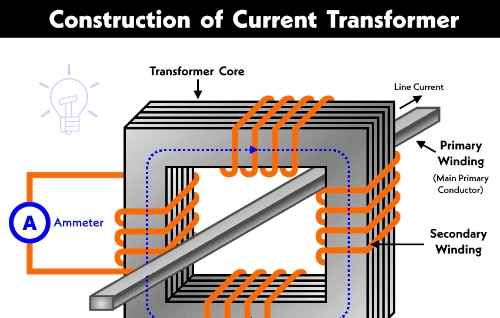

A power transformer is responsible for stepping the voltage up or down between generation, transmission, and end-use systems.

Core Functions:

- Voltage transformation (e.g., 220 kV → 33 kV → 11 kV)

- Load isolation and protection

- Power quality stabilization

- Grid-to-data center interfacing
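As a small worked example of the voltage transformation step, the sketch below computes the nominal voltage ratio of each stage in the 220 kV → 33 kV → 11 kV chain and the three-phase line current using I = S / (√3 · V). The 100 MVA rating is an assumed value for illustration, and the ratio shown is the nameplate voltage ratio, not the winding turns ratio (which also depends on the delta or wye connection).

```python
import math


def voltage_ratio(primary_kv: float, secondary_kv: float) -> float:
    """Nominal line-voltage ratio of one transformation stage."""
    return primary_kv / secondary_kv


def line_current_a(rating_mva: float, line_voltage_kv: float) -> float:
    """Three-phase line current in amperes at rated apparent power."""
    return rating_mva * 1e6 / (math.sqrt(3) * line_voltage_kv * 1e3)


stages = [(220.0, 33.0), (33.0, 11.0)]
for primary, secondary in stages:
    print(f"{primary:.0f} kV -> {secondary:.0f} kV: ratio {voltage_ratio(primary, secondary):.2f}")

# Assumed 100 MVA unit: current rises as voltage steps down.
for kv in (220.0, 33.0):
    print(f"100 MVA at {kv:.0f} kV: {line_current_a(100.0, kv):.0f} A")
```

The much higher current on the low-voltage side is one reason conductor sizing and cooling dominate the design of the secondary windings.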

Practical Engineering Context:

In AI data centers, transformers must handle:

- High load variability (due to fluctuating AI workloads)

- Harmonic distortion (caused by power electronics)

- Continuous 24/7 operation under peak load

This creates stringent design requirements beyond conventional industrial applications.
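Harmonic content is commonly summarized by current total harmonic distortion (THD) and by the K-factor, which weights each harmonic by the square of its order to reflect extra winding heating. The sketch below uses the standard definitions; the harmonic spectrum is a made-up example, not measured data from any data center.

```python
import math
from typing import Mapping


def k_factor(harmonics: Mapping[int, float]) -> float:
    """K-factor = sum(I_h^2 * h^2) / sum(I_h^2), where h is the harmonic order."""
    total_sq = sum(i ** 2 for i in harmonics.values())
    weighted = sum((i ** 2) * (h ** 2) for h, i in harmonics.items())
    return weighted / total_sq


def current_thd(harmonics: Mapping[int, float]) -> float:
    """RMS of all non-fundamental currents divided by the fundamental current."""
    fundamental = harmonics[1]
    distortion = math.sqrt(sum(i ** 2 for h, i in harmonics.items() if h != 1))
    return distortion / fundamental


# Hypothetical per-unit spectrum for a rectifier-heavy load (order -> current).
spectrum = {1: 1.00, 5: 0.20, 7: 0.14, 11: 0.09, 13: 0.07}
print(f"THD: {current_thd(spectrum):.1%}")
print(f"K-factor: {k_factor(spectrum):.2f}")
```

A purely sinusoidal load gives a K-factor of 1; higher values indicate that a standard transformer would need derating or a harmonic-rated design.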

3. Why Transformers Are Becoming a Bottleneck

3.1 Manufacturing Lead Times

Large-scale power transformers (≥100 MVA) often require:

- 6–18 months of production time once manufacturing starts (order backlogs can stretch total lead times to 2–4 years)

- Specialized materials (e.g., grain-oriented silicon steel)

- Complex logistics and testing
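One practical consequence of these production times is that the purchase order date has to be worked backward from the planned energization date. The sketch below shows that back-scheduling; the target date and the four-month allowance for testing, shipping, and installation are hypothetical, and the helper names are our own.

```python
from datetime import date


def subtract_months(d: date, months: int) -> date:
    """Step back a whole number of months, clamping the day to 28 so the result is always valid."""
    total = d.year * 12 + (d.month - 1) - months
    year, month_index = divmod(total, 12)
    return date(year, month_index + 1, min(d.day, 28))


def latest_order_date(energization: date, production_months: int, buffer_months: int) -> date:
    """Latest date an order can be placed and still meet the energization date."""
    return subtract_months(energization, production_months + buffer_months)


target = date(2028, 6, 15)  # hypothetical energization date
for production in (6, 18):  # production range quoted in the text
    order_by = latest_order_date(target, production, buffer_months=4)
    print(f"{production} months production -> order by {order_by}")
```

The sketch ignores queue time before a manufacturing slot opens, so any known backlog should be added to the buffer.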

Table: Transformer Supply Constraints

| Factor | Impact on AI Expansion |
| --- | --- |
| Long production cycles | Delays data center commissioning |
| Limited global suppliers | Price escalation |
| Material shortages | Capacity bottlenecks |
| Custom design requirements | Increased engineering complexity |

3.2 Grid Infrastructure Limitations

Existing grid systems were not designed for concentrated AI loads.

- Power grids lack capacity for hyperscale facilities

- Urban substations face space constraints

- Transmission upgrades lag behind demand

Engineering Challenge:

Upgrading grid infrastructure requires new substation transformer installations, often involving regulatory approvals and long construction cycles.

3.3 Thermal and Efficiency Constraints

Transformers in AI environments operate near full load continuously.

- Increased thermal stress

- Higher insulation aging rates

- Reduced operational lifespan if poorly designed
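The link between thermal stress and insulation aging can be illustrated with the aging acceleration factor from the IEEE C57.91 loading guide, which applies to oil-immersed units with 65 °C-rise insulation and uses a 110 °C reference hot-spot temperature. The hot-spot temperatures evaluated below are illustrative assumptions, not measurements.

```python
import math

REFERENCE_HOT_SPOT_C = 110.0  # reference hot-spot temperature in IEEE C57.91
AGING_CONSTANT = 15000.0      # Arrhenius-type constant used in the guide


def aging_acceleration_factor(hot_spot_c: float) -> float:
    """Relative insulation aging rate versus operation at the 110 C reference hot spot."""
    return math.exp(
        AGING_CONSTANT / (REFERENCE_HOT_SPOT_C + 273.0)
        - AGING_CONSTANT / (hot_spot_c + 273.0)
    )


for temp_c in (98.0, 110.0, 120.0, 130.0):
    print(f"Hot spot {temp_c:.0f} C: aging rate x{aging_acceleration_factor(temp_c):.2f}")
```

A factor of 1 means insulation ages at the reference rate; a hot spot only 10 °C above the reference already ages insulation well over twice as fast, which is why continuous near-full-load duty shortens service life.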

Solution Direction:

Use energy-efficient transformers with:

- Advanced cooling systems (ONAN, ONAF, OFAF)

- Low-loss core materials

- Digital monitoring systems

4. Transformer Design Requirements for AI Data Centers

As artificial intelligence drives hyperscale data centers toward gigawatt-scale power demands, power transformers have become a critical bottleneck in modern electrical infrastructure. Traditional designs struggle to support the extreme power densities of AI workloads, where GPU clusters push rack loads from about 15 kW to 100–240 kW and entire facilities routinely exceed 100 MW.

Key transformer design requirements for AI data centers now include high-efficiency operation under continuous near-full load, superior harmonic handling to manage non-linear GPU power supplies, exceptional voltage regulation with fast response to sudden load spikes, and enhanced thermal management for sustained high-density operation. Operators increasingly demand dry-type or advanced liquid-immersed transformers with low losses, high short-circuit withstand capability, and compatibility with emerging 800V DC architectures and solid-state transformer (SST) technologies.

Table: Key Design Requirements

| Parameter | Requirement for AI Data Centers |
| --- | --- |
| Load Capacity | High continuous load (>90%) |
| Efficiency | ≥99% to reduce energy loss |
| Harmonic Tolerance | Enhanced winding design |
| Cooling System | Forced oil/air cooling |
| Redundancy | N+1 or 2N configurations |
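To put the efficiency requirement in perspective, the sketch below estimates losses and efficiency at 90% loading using the usual split into a constant no-load loss and a load loss that scales with the square of loading. The 100 MVA rating, the 50 kW and 300 kW loss values, and the 0.95 power factor are hypothetical; real values come from the manufacturer's test report.

```python
HOURS_PER_YEAR = 8760


def losses_kw(load_fraction: float, no_load_loss_kw: float, rated_load_loss_kw: float) -> float:
    """Total losses: constant core loss plus winding loss scaling with load squared."""
    return no_load_loss_kw + rated_load_loss_kw * load_fraction ** 2


def efficiency(rated_mva: float, load_fraction: float, power_factor: float,
               no_load_loss_kw: float, rated_load_loss_kw: float) -> float:
    """Output power divided by output power plus losses."""
    output_kw = rated_mva * 1000.0 * load_fraction * power_factor
    loss = losses_kw(load_fraction, no_load_loss_kw, rated_load_loss_kw)
    return output_kw / (output_kw + loss)


# Hypothetical 100 MVA unit running continuously at 90% load.
loss = losses_kw(0.9, no_load_loss_kw=50.0, rated_load_loss_kw=300.0)
eff = efficiency(100.0, 0.9, 0.95, 50.0, 300.0)
print(f"Losses: {loss:.0f} kW, efficiency: {eff:.2%}")
print(f"Annual energy lost: {loss * HOURS_PER_YEAR / 1000.0:.0f} MWh")
```

Even above 99% efficiency, a unit of this size running around the clock dissipates thousands of megawatt-hours per year, so small loss differences between designs add up.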

Practical Insight:

Transformers must be designed for non-linear loads, which differ significantly from traditional industrial loads.

5. Integration with Renewable Energy Systems

The explosive growth of AI data centers is creating unprecedented electricity demand, projected to push global data center consumption toward 945 TWh by 2030, with AI workloads as the primary driver. To meet this surge sustainably, AI integration with renewable energy systems has become essential for hyperscale operators seeking reliable, low-carbon power.

Advanced AI algorithms now optimize renewable integration by forecasting solar and wind generation, dynamically balancing intermittent supply with battery storage, and enabling intelligent demand response that shifts AI workloads to periods of peak renewable availability. AI-powered microgrids, predictive maintenance, and real-time energy management systems improve efficiency by over 15%, reduce curtailment, and enhance grid stability while supporting 24/7 high-density GPU operations.

Role of Solar Transformers:

- Connect solar farms to the grid

- Stabilize intermittent energy input

- Enable hybrid energy systems

Integration Challenge:

Renewables introduce:

- Voltage fluctuations

- Frequency instability

- Reverse power flow scenarios

Engineering Solution:

Deploy smart transformers with real-time monitoring and adaptive control.

6. China Transformer Manufacturer: Global Supply Perspective

China plays a critical role in the global transformer supply chain.

In 2026, as the AI data center boom and global energy transition drive unprecedented electricity demand, China transformer manufacturers have emerged as the backbone of the worldwide power infrastructure supply chain. Controlling approximately 60% of global production capacity, Chinese companies are the dominant force in power transformers, with exports surging 36% year-on-year to a record 64.6 billion yuan ($9.3 billion) in 2025.

While Western markets face severe shortages—up to 30% supply gaps and lead times stretching 2–4 years—China transformer manufacturers offer significantly shorter delivery cycles, competitive pricing, and advanced designs tailored for high-density AI loads, renewable integration, and grid modernization. Major players like TBEA, China XD Group, and others have order books filled through 2027, supplying hyperscale data centers, utilities, and renewable projects across the US, Europe, Middle East, and Asia.

Advantages:

- Large-scale production capacity

- Competitive pricing

- Increasing technological sophistication

Buyer Considerations:

- Compliance with IEC/IEEE standards

- Quality control and testing capability

- Export experience and logistics

Strategic Insight:

Partnering with a reliable China transformer manufacturer can reduce lead times and cost, but requires strict vendor qualification.

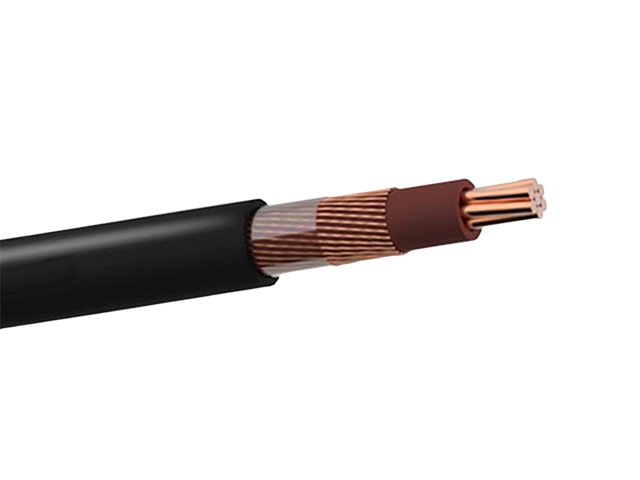

7. Cost Analysis: Transformer Impact on Data Center CAPEX

Transformers represent a significant portion of the electrical infrastructure cost.

Table: Cost Contribution

| Component | % of Electrical CAPEX |
| --- | --- |
| Power Transformers | 20–30% |
| Switchgear | 15–25% |
| Cabling & Distribution | 10–20% |
| Backup Systems (UPS, Gen) | 20–30% |

Insight:

Delays or cost increases in power transformers can directly impact project ROI and deployment timelines.

8. Emerging Solutions to the Transformer Bottleneck

8.1 Modular Transformer Systems

- Faster deployment

- Scalable architecture

8.2 Digital Transformers

- Real-time monitoring

- Predictive maintenance

- Reduced downtime

8.3 High-Efficiency Core Materials

- Amorphous metal cores

- Low-loss designs

9. Practical Procurement Strategy for Global Buyers

Step-by-Step Approach:

- Forecast power demand accurately

- Select an appropriate transformer capacity

- Ensure compliance with local standards

- Evaluate supplier production capability

- Plan logistics and installation timeline
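The capacity-selection step can be sketched as a simple unit count: convert the forecast load to apparent power, divide by the usable capacity of one unit, and then apply the redundancy scheme. The 270 MW load, 0.95 power factor, 100 MVA unit size, and 90% loading limit below are assumed values for illustration only.

```python
import math


def required_units(load_mw: float, power_factor: float, unit_rating_mva: float,
                   max_loading: float, scheme: str = "N+1") -> int:
    """Number of transformers needed for a load under a given redundancy scheme."""
    load_mva = load_mw / power_factor
    base_units = math.ceil(load_mva / (unit_rating_mva * max_loading))
    if scheme == "N":
        return base_units
    if scheme == "N+1":
        return base_units + 1
    if scheme == "2N":
        return 2 * base_units
    raise ValueError(f"unknown redundancy scheme: {scheme}")


# Hypothetical campus: 270 MW forecast load served by 100 MVA units.
for scheme in ("N", "N+1", "2N"):
    count = required_units(270.0, 0.95, 100.0, 0.9, scheme)
    print(f"{scheme}: {count} x 100 MVA")
```

Because every additional unit carries its own lead time, the redundancy choice feeds directly into the logistics and installation timeline.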

Table: Supplier Evaluation Criteria

| Criteria | Importance Level |
| --- | --- |
| Technical capability | High |
| Certification | High |
| Delivery time | Critical |
| Cost competitiveness | Medium |
| After-sales support | High |
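One way to apply these criteria is a weighted score per supplier. In the sketch below, the mapping of importance levels to numeric weights (Critical = 3, High = 2, Medium = 1), the 1 to 5 rating scale, and the sample ratings are all assumptions chosen for illustration; a real evaluation would set its own weights.

```python
IMPORTANCE_WEIGHTS = {"Critical": 3, "High": 2, "Medium": 1}  # assumed mapping

CRITERIA = {
    "Technical capability": "High",
    "Certification": "High",
    "Delivery time": "Critical",
    "Cost competitiveness": "Medium",
    "After-sales support": "High",
}


def weighted_score(ratings: dict) -> float:
    """Weighted average of 1-5 ratings, using each criterion's importance level."""
    total_weight = sum(IMPORTANCE_WEIGHTS[level] for level in CRITERIA.values())
    weighted_sum = sum(
        IMPORTANCE_WEIGHTS[CRITERIA[name]] * ratings[name] for name in CRITERIA
    )
    return weighted_sum / total_weight


# Hypothetical supplier ratings on a 1-5 scale.
supplier_a = {
    "Technical capability": 4,
    "Certification": 5,
    "Delivery time": 3,
    "Cost competitiveness": 5,
    "After-sales support": 4,
}
print(f"Supplier A score: {weighted_score(supplier_a):.1f} / 5")
```

A scheme like this makes it explicit that a low delivery-time rating costs a supplier more than a weak price, matching the importance levels in the table.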

10. FAQ Section

Why are power transformers critical for AI data centers?

They enable voltage regulation and stable power delivery, which is essential for high-density computing.

What is the biggest challenge in transformer supply?

Long manufacturing lead times and limited global production capacity.

How does AI affect power consumption?

AI workloads significantly increase AI data center power consumption, often exceeding traditional infrastructure limits.

Can renewable energy fully power AI data centers?

Partially, but this requires integration with solar transformers and grid stabilization systems.

Conclusion

As AI continues to drive exponential growth in computing demand, the role of the power transformer becomes increasingly strategic. No longer just a passive component, it is now a **critical enabler, and a potential bottleneck,** in global AI infrastructure expansion.

From manufacturing constraints to grid limitations and advanced design requirements, the challenges are complex but solvable. By adopting modern engineering practices, leveraging global supply chains (including China transformer manufacturers), and investing in next-generation technologies, stakeholders can overcome these bottlenecks.

For engineers, developers, and procurement professionals, understanding transformer dynamics is no longer optional—it is essential for staying competitive in the age of AI.

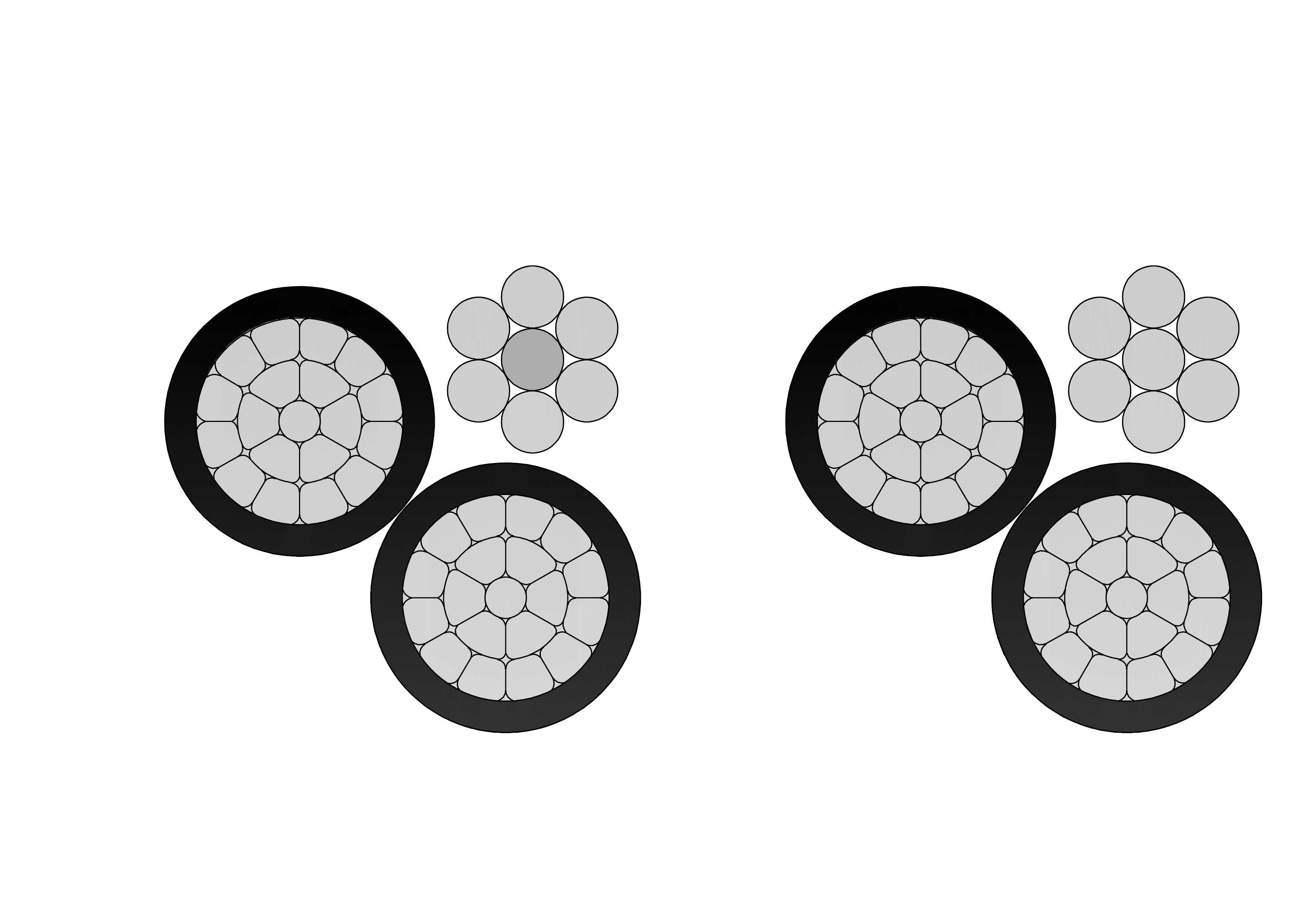
